Study

Buder, Fabian (2026): AI at Work – A Cross-Generational Perspective on the Leadership Choices That Will Shape the Value of AI at Work. Voices of the Leaders of Tomorrow 2026. Nuremberg Institute for Market Decisions & St. Gallen Symposium.

2026

Voices of the Leaders of Tomorrow 2026

Artificial intelligence (AI) is moving from a technological breakthrough to a common part of business infrastructure. As it becomes embedded in everyday work, the leadership challenge is no longer whether to adopt AI, but how to shape the conditions under which people will work with it, trust it, and benefit from it.

This transformation will be fundamentally human. The more capable AI becomes, the more important it is to define what should remain distinctly human: judgment, responsibility, creativity, and the ability to exercise meaningful control. The real strategic question is not how far AI can go, but what kind of work and what kind of organization leaders want it to create.

This report combines the perspectives of the Leaders of Tomorrow and senior executives to show where expectations already align and where important tensions are emerging. Those differences matter. They reveal not only how future leaders think about AI, but also what kinds of organizations they are likely to trust, join, and help build.

MAIN RESULTS

- AI Strategy Must Start with a Human North Star

- Performance Gains Will Fail Without Legitimacy

- AI Adoption Depends on Enablement, Not Access

- Trust Requires Visible Accountability and Human Control

- AI Productivity Gains Must Be Reinvested in People

1. AI Strategy Must Start with a Human North Star

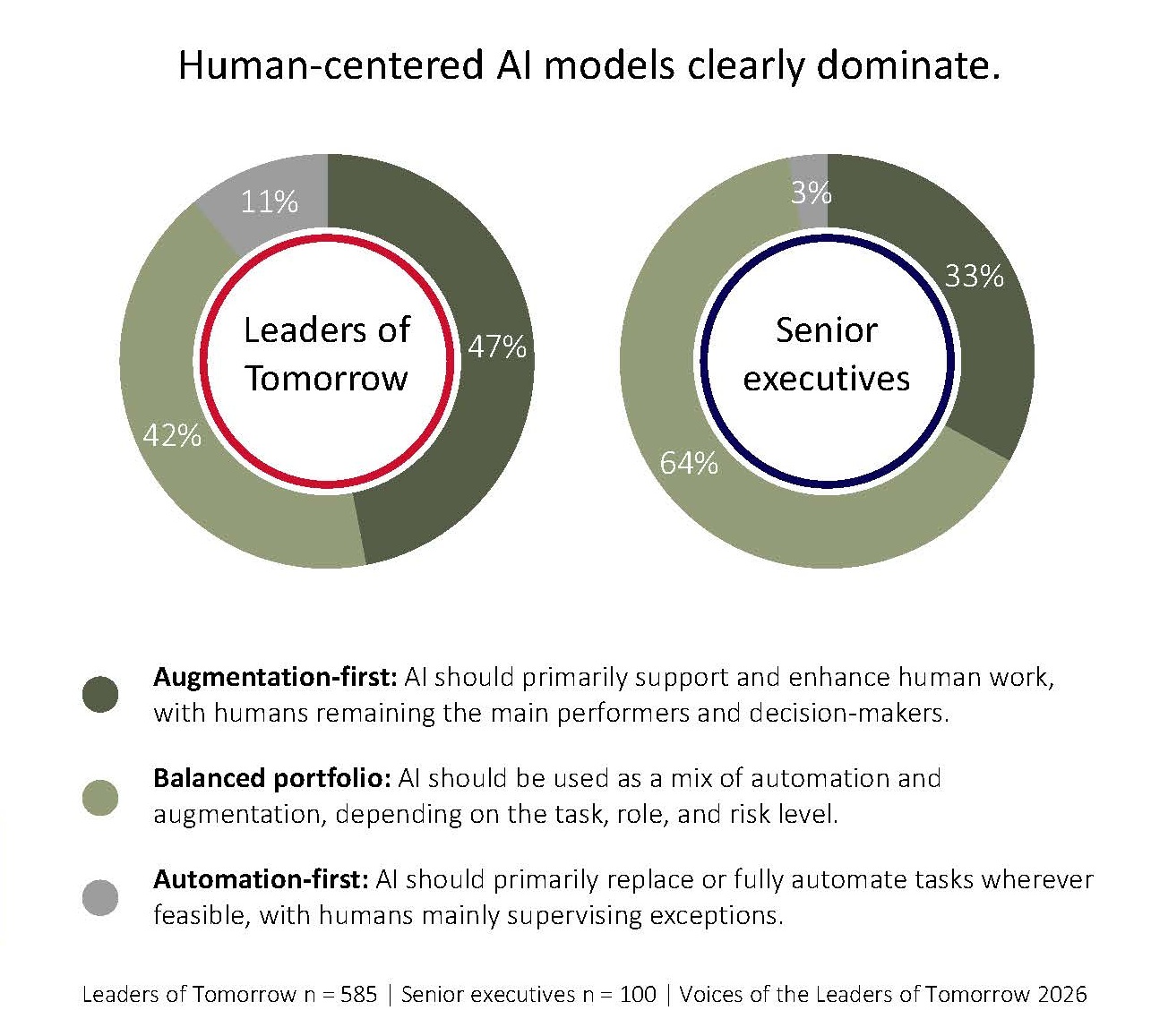

Leaders of Tomorrow see AI’s greatest value in facilitating better decision-making, faster learning, higher-quality output, and more meaningful work for humans. The real strategic choice is not whether to deploy AI, but what human capabilities it is meant to strengthen.

What Leaders of Tomorrow are calling for:

- Define human value (Be explicit about the human value AI is meant to create.)

- Prioritize augmentation (Use AI first to strengthen judgment, learning, quality, and meaningful work.)

- Keep humans accountable (Reserve human judgment where trade-offs are complex or consequences are significant.)

Future leaders want AI to expand human capability, not reduce human relevance. They expect organizations to use AI to strengthen judgment, learning, quality, and meaningful work; keep human judgment central where trade-offs are complex or consequences are significant; and build that model on clear rules, explainability, and responsible deployment.

2. Performance Gains Will Fail Without Legitimacy

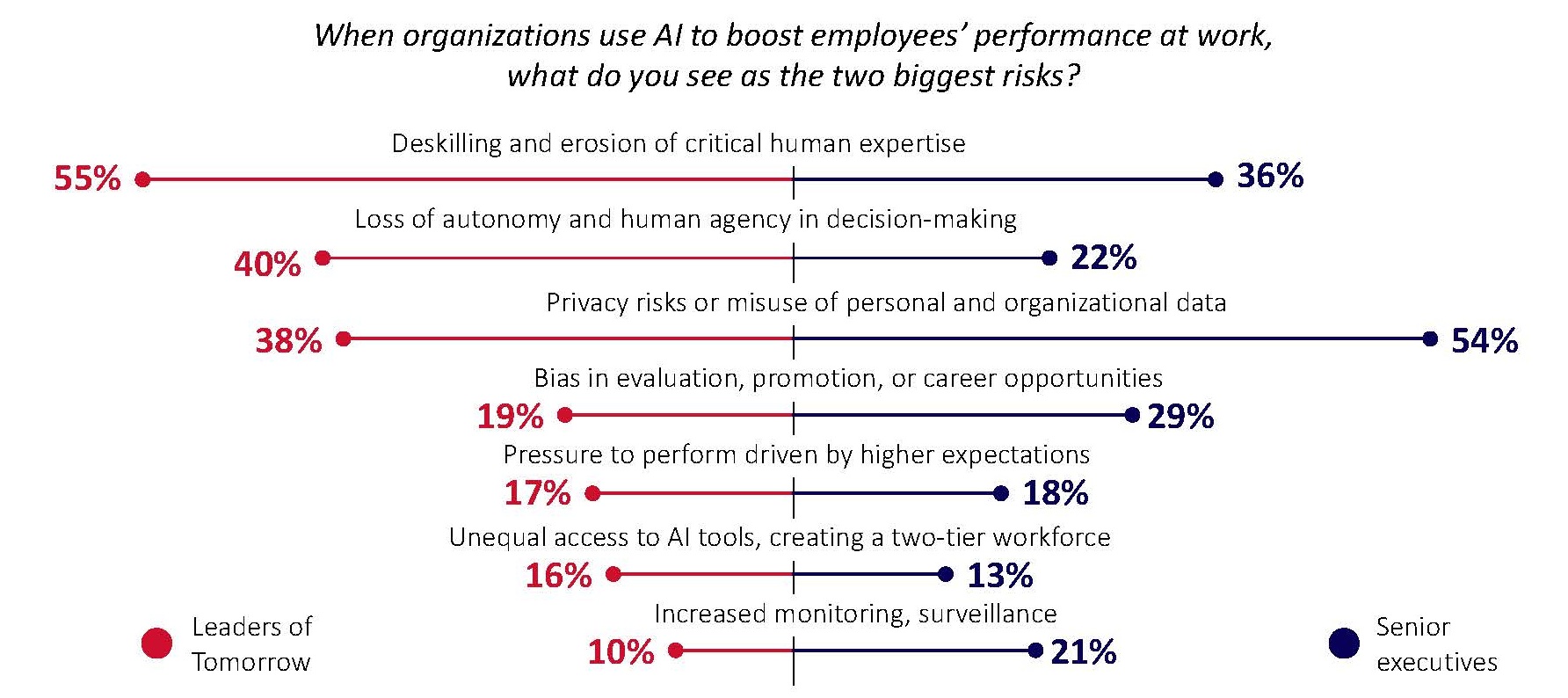

AI is seen as highly valuable in knowledge work, analysis, communication, and routine tasks. However, support weakens when AI erodes human skills, reduces agency, or creates privacy, fairness, and legitimacy concerns.

What Leaders of Tomorrow are calling for:

- Protect expertise (Prevent deskilling and keep critical human skills in active use.)

- Protect agency (Ensure people retain meaningful discretion in how work is done and decisions are made.)

- Set boundaries (Rule out intrusive, unfair, and unaccountable uses of AI.)

Future leaders reject AI models that weaken expertise, reduce human discretion, or expose people to unfair, intrusive, or unchallengeable systems. AI will earn durable support only when performance gains do not come at the cost of skills, agency, and legitimacy at work.

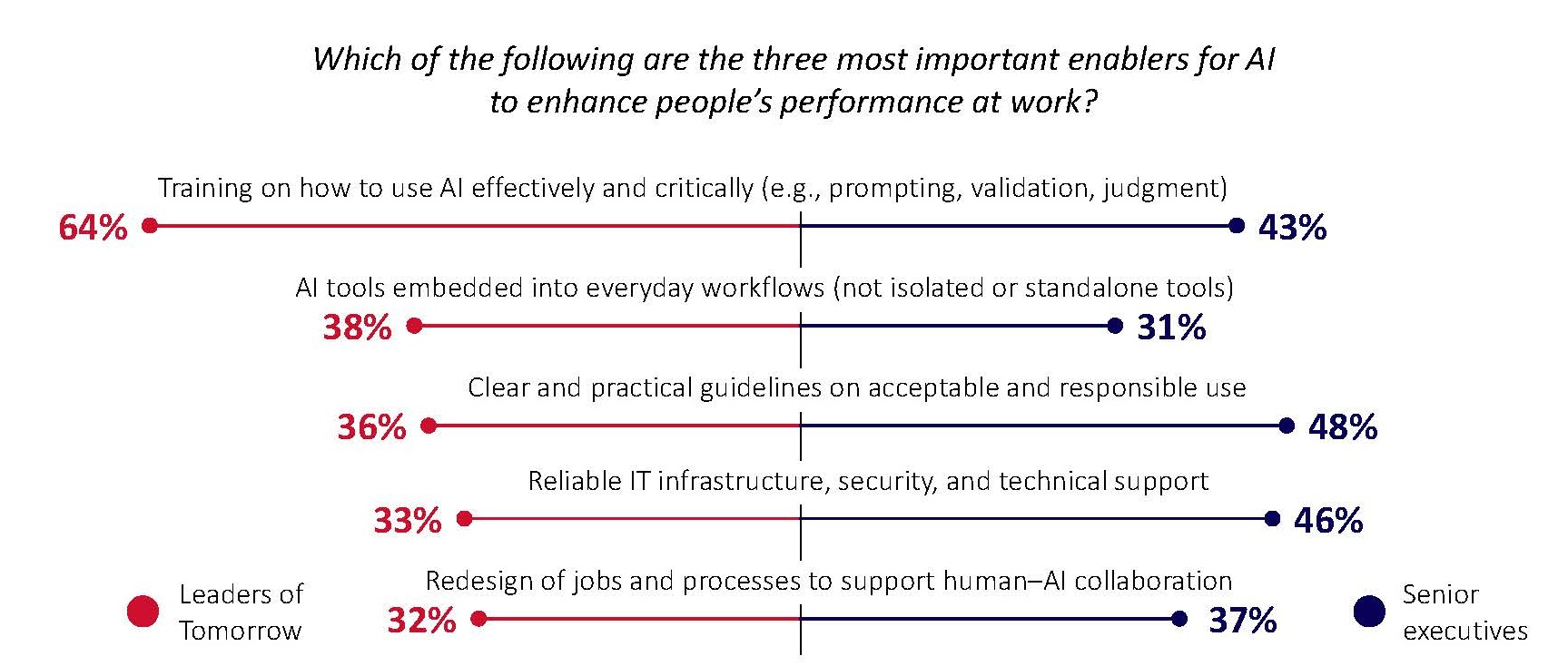

3. AI Adoption Depends on Enablement, Not Access

Training, clear rules, redesigned workflows, and stronger capabilities for reviewing and using AI effectively are what make AI usable at scale. However, many organizations seem to lack the skills, structures, and decision safeguards needed to use AI well.

What Leaders of Tomorrow are calling for:

- Build critical AI skills (Train people to use AI effectively, verify outputs, and exercise judgment.)

- Embed AI in workflows (Integrate AI into everyday routines with practical rules and guidance.)

- Clarify review roles (Define who checks outputs, validates decisions, and escalates issues.)

Future leaders do not see AI scaling as a tooling problem. They expect organizations to make AI usable in everyday work by building practical skills, setting clear rules for AI-supported work, and clarifying who reviews outputs, validates decisions, and escalates issues when needed.

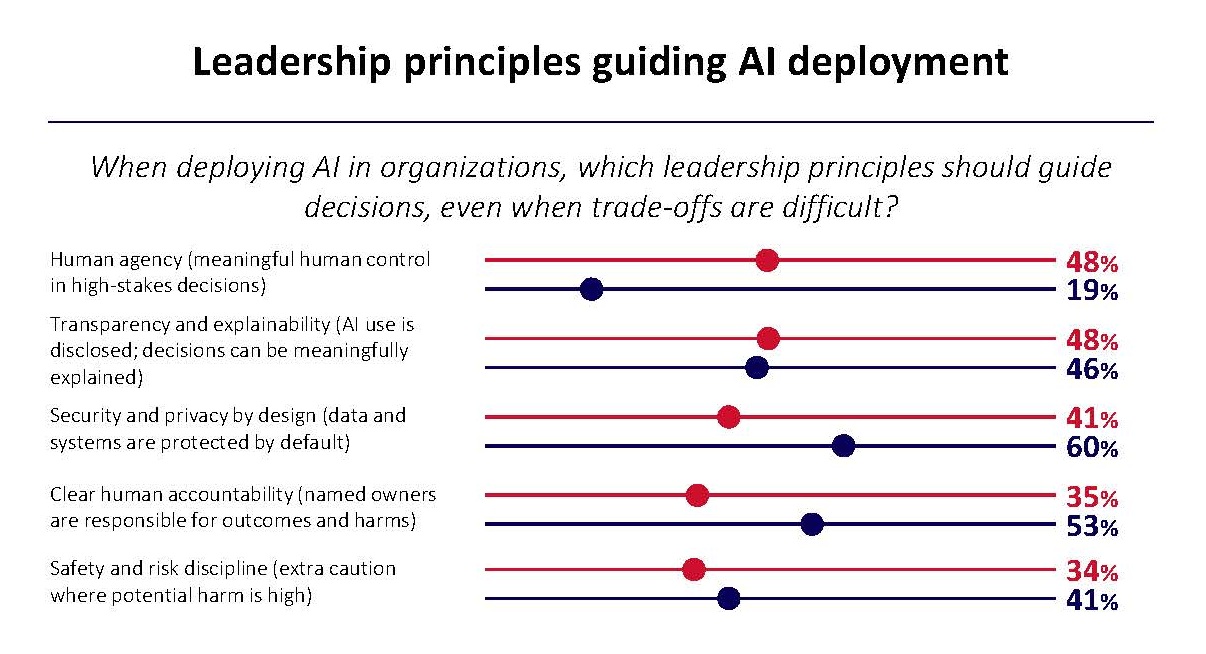

4. Trust Requires Visible Accountability and Human Control

Trust in corporate AI use remains conditional, especially among Leaders of Tomorrow. Trust grows when AI use is transparent, responsibility is clear, and meaningful human control remains in place.

What Leaders of Tomorrow are calling for:

- Disclose AI use (Show clearly where and how AI is used in decisions that affect people.)

- Assign accountable owners (Make named leaders responsible for outcomes and able to intervene when needed.)

- Preserve human override (Ensure important decisions can still be challenged, reversed, or decided by humans.)

Future leaders do not ask corporations to demonstrate trust in the abstract. They expect organizations to show where AI is used, make clear who is responsible, and preserve human authority wherever decisions affect people in meaningful ways. Trust grows when AI use is visible, responsibility is named, and important decisions can still be challenged or overturned.

5. AI Productivity Gains Must Be Reinvested in People

Whether AI creates lasting value will depend on how productivity gains are used. Leaders of Tomorrow reject a labor-cost logic and expect those gains to be reinvested in people through better work and credible transition support.

What Leaders of Tomorrow are calling for:

- Reinvest gains in people (Use AI gains for better jobs, stronger skills, mobility, and broader employee upside.)

- Take responsibility for transition (Support employees whose roles materially change or disappear with credible transition pathways.)

Future leaders do not treat AI productivity gains as a license for labor reduction. They expect those gains to improve work, expand opportunity, and support people through transition. Social acceptance depends not only on whether AI delivers value, but on whether that value is reinvested in ways people recognize as fair.

About the Sample

Leaders of Tomorrow

The study was targeted at the Leaders of Tomorrow—a carefully selected global group of highly promising young talent up to 35 years of age, who were invited to challenge, debate, and inspire at the St. Gallen Symposium. For this report, participants were recruited from the following communities:

- St. Gallen Global Essay Competition Participants: International students who competed in the St. Gallen Global Essay Competition were personally invited by the St. Gallen Symposium to take part in the study.

- St. Gallen Symposium Leaders of Tomorrow Community: The St. Gallen Symposium selected participants from its worldwide community of young talents who attended past symposia as Leaders of Tomorrow.

Senior executives

This study also gives voice to a global sample of senior executives (C-suite direct reports), aged 50 and older, working for companies on the Forbes Global 2000 list of the world’s largest corporations. They were recruited and interviewed by Beresford Research on behalf of the Nuremberg Institute for Market Decisions.

Conducting the surveys

Surveys were conducted in January and February 2026. A total of 585 Leaders of Tomorrow participated online, and 100 senior executives were surveyed by phone, with screen-sharing to make it easier to answer rating questions and review lists of items.

Giving a voice to a unique group of global talent

This survey is not representative in the sense of population sampling. However, we captured a broad and international group of participants that provides a unique snapshot of the opinions of young top talent and top managers around the world.

With active and vocal participants from across the globe, this study offers opinions from a culturally and economically diverse set of contexts, various regions, and both developed and emerging or developing economies. By comparing the perspectives of Leaders of Tomorrow and senior executives, the report helps leaders understand what future expectations around AI at work will mean for trust, capability, and organizational design.

Authors

- Dr. Fabian Buder, Head of Marketing & Consumer Behavior, NIM, fabian.buder@nim.org
