Arora, N., Chakraborty, I. & Nishimura, Y. (2026). Human-AI Hybrids: The New Power in Marketing Research. NIM Marketing Intelligence Review, 18(1), 36-41. https://doi.org/10.2478/nimmir-2026-0006

Human-AI Hybrids: The New Power in Marketing Research

The marketing research industry is in the middle of a significant transformation, driven by the rapid advancements in generative AI (GenAI) and large language models (LLMs). At a time when the marketing function is investing aggressively in AI areas such as content creation and personalization, our research investigates how LLMs serve as valuable collaborators to generate consumer insights. Our central idea is to create powerful “AI–human hybrids,” which reshape how companies understand their customers, develop products and craft marketing strategies.

The AI promise in the insight generation process

In a reimagined research pipeline, the human researcher remains essential, acting as a supervisor to guide the AI, validate its outputs, and handle ethically sensitive or novel contexts where AI may falter. By embracing this partnership with LLMs, companies can uncover a new level of agility and depth in their quest for consumer understanding, making the insight generation process more efficient and effective.

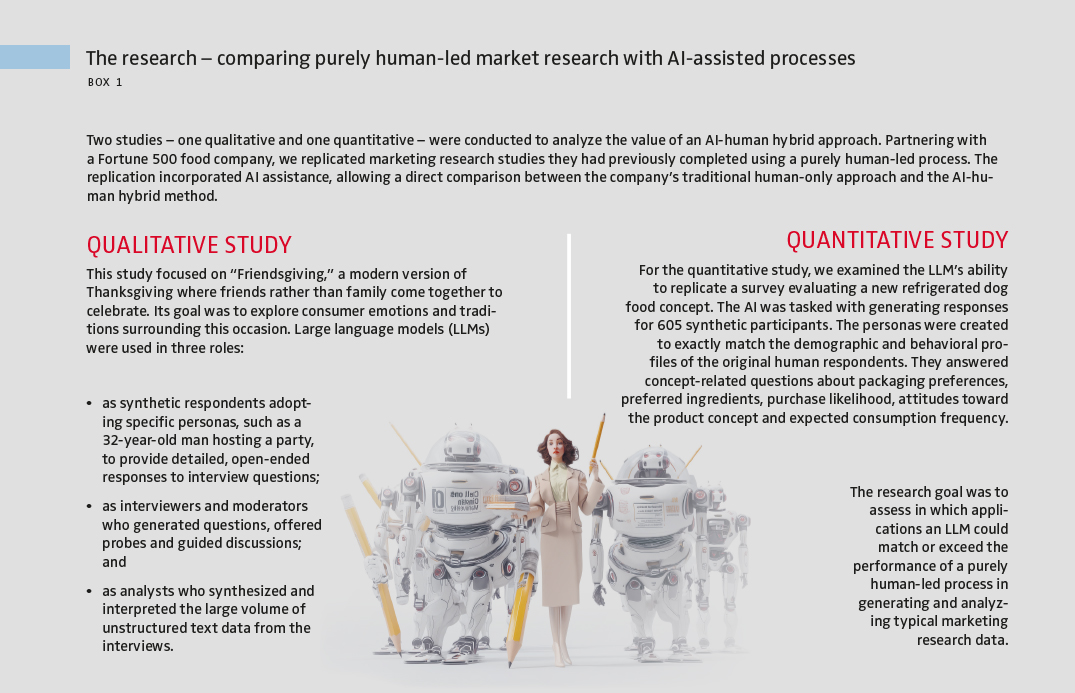

In our research, we analyze how AI-human hybrids can accelerate the marketing research process, reduce costs and uncover deeper insights (see Box 1). The results from two studies, where we replicate human-only market research projects, demonstrate that LLMs can serve as effective assistants at each stage of the research pipeline, from developing the research design to obtaining data, analyzing it and delivering insights that drive business decisions. We also test a novel application of LLMs – their ability to generate “synthetic respondents” – AI-powered personas that can participate in in-depth interviews or answer surveys.

Evaluation of the human–AI hybrid in qualitative research

In our qualitative study on Friendsgiving, the AI integration worked very well in all phases of the project.

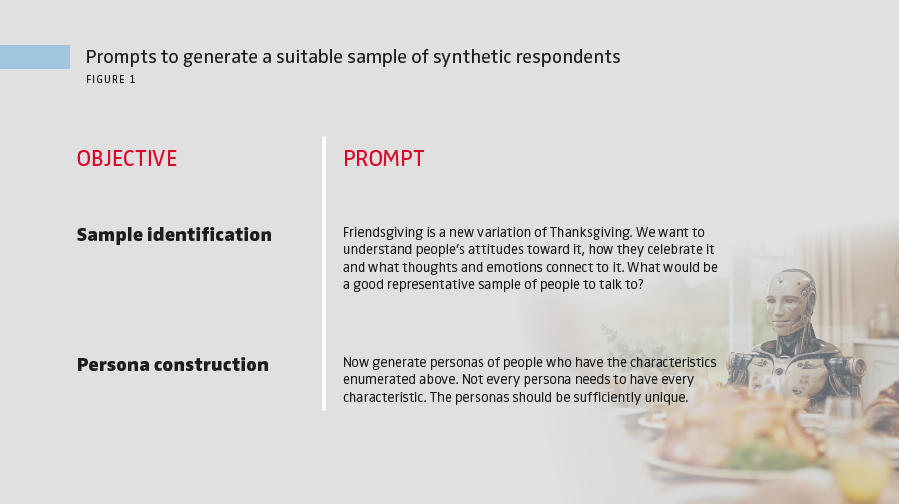

> Respondent generation – building the silicon sample

On the data generation front, we find that LLMs can effectively create the required characteristics for the sample to be interviewed. They succeeded in generating synthetic respondents that matched the characteristics of the original sample. Beyond replicating the respondent profiles in the original study, we asked the LLM to suggest a suitable group of respondents to generate ideas on the research topic of Friendsgiving, using the prompts in Figure 1. In response to the first prompt, the LLM produced a rich description of potential respondents. Although the original study had good variation in respondent demographics such as gender, age and ethnicity, the LLM came up with additional dimensions, suggesting people with dietary restrictions and members of minority groups such as LGBTQ+ people, expatriates and international students – all groups that may have a particular interest in a more inclusive, modern Thanksgiving celebration. In response to the second prompt, the LLM generated 10 unique synthetic personas to match the suggestions, building an improved synthetic sample.

> Data collection – asking, responding, scoring and probing

In the next step, the LLM conducted and moderated the in-depth interviews. The LLM can be prompted to simply respond to the questions from the discussion guide, providing synthetic answers. In addition, it can also be prompted to improve the depth, richness and consistency of the answers. As a “scorer,” an LLM can further evaluate its own answers against key research objectives and metrics like clarity and depth and provide a score. In its role as a “prober,” it can ask the synthetic respondent to elaborate further if the evaluation score falls below a certain threshold. These additional roles are excellent examples of novel ways in which an LLM can add value during data generation. In our study, we let human evaluators compare the answers from the original study and the replicated study and applied several statistical measures. The LLM-generated responses were superior to human responses in terms of both depth and insightfulness, thus offering effectiveness gains.
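The scorer and prober roles described above can be sketched as a small loop. This is a minimal illustration under stated assumptions, not the prompts or code used in the study: the function `interview_question`, the threshold of 0.7, the cap of two probes and the toy stand-ins `toy_respond` and `toy_score` are all hypothetical. In practice, both the responder and the scorer would be LLM calls.

```python
from typing import Callable, List, Tuple


def interview_question(
    question: str,
    respond: Callable[[str], str],
    score: Callable[[str, str], float],
    threshold: float = 0.7,   # assumed cut-off, not taken from the study
    max_probes: int = 2,      # assumed cap on follow-up probes
) -> Tuple[str, List[float]]:
    """Ask one question, score the answer, and probe while the score is low."""
    answer = respond(question)
    scores = [score(question, answer)]
    probes = 0
    while scores[-1] < threshold and probes < max_probes:
        # Prober role: ask the synthetic respondent to elaborate.
        probe = (
            f"{question}\nYour earlier answer: {answer}\n"
            "Please elaborate with a concrete example."
        )
        answer = answer + " " + respond(probe)
        # Scorer role: re-evaluate the extended answer.
        scores.append(score(question, answer))
        probes += 1
    return answer, scores


# Toy stand-ins so the sketch runs without any model.
def toy_respond(prompt: str) -> str:
    if "elaborate" in prompt:
        return "Last year we hosted twelve friends and everyone brought a dish."
    return "It is fun."


def toy_score(question: str, answer: str) -> float:
    # Crude depth proxy: longer answers score higher, capped at 1.0.
    return min(len(answer.split()) / 10.0, 1.0)


final_answer, score_trace = interview_question(
    "What does Friendsgiving mean to you?", toy_respond, toy_score
)
print(score_trace)
```

With these toy functions, the first short answer falls below the threshold, one probe is issued, and the extended answer passes, so the loop stops after a single follow-up.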

> Data analysis – insight generation and reporting

On the analysis front, the LLMs performed as well as analysts, matching human experts in identifying key ideas, grouping them into themes and summarizing information. Although LLMs missed some themes that humans detected, they also generated new ones that humans did not.

Evaluation of human-AI hybrids for survey research

In a quantitative setting, the LLMs – prompted in similar ways – created synthetic respondents that answered the survey questions successfully. However, the basic LLM had mixed success. Although it correctly captured the direction and magnitude of consumer attitudes, it showed two key weaknesses found in many basic AI applications. First, the responses lacked heterogeneity: there was less variation in the AI’s answers than in the human data. Second, the LLM answers lacked the internal consistency found in human answers; for example, the AI did not rate attributes such as “healthy ingredients” and “safest food” similarly, as humans do. To address these limitations of the basic LLM, we tested two effective techniques to incorporate context and improve the quality of synthetic survey data.
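To make the two weaknesses concrete, the sketch below shows one simple way to check them: the spread of ratings as a proxy for heterogeneity, and the correlation between two related items as a proxy for internal consistency. These measures are our illustrative assumptions, not necessarily the statistics used in the study, and all ratings in the example are invented purely for demonstration.

```python
from statistics import mean, pstdev
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between two equally long rating lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # no variation, so correlation is undefined; report 0
    return cov / (var_x * var_y) ** 0.5


# Invented 1-5 ratings for illustration only (NOT data from the study).
human_healthy = [5, 3, 4, 2, 5, 1]
human_safe = [5, 2, 4, 2, 4, 1]
synthetic_healthy = [4, 4, 4, 4, 5, 4]
synthetic_safe = [4, 5, 4, 4, 4, 4]

# Heterogeneity: how much do ratings vary across respondents?
print("spread, human:", round(pstdev(human_healthy), 2))
print("spread, synthetic:", round(pstdev(synthetic_healthy), 2))

# Internal consistency: do related attributes move together?
print("consistency, human:", round(pearson(human_healthy, human_safe), 2))
print("consistency, synthetic:", round(pearson(synthetic_healthy, synthetic_safe), 2))
```

In this toy data, the synthetic ratings cluster tightly around one value and the two related items do not move together, which mirrors the pattern described above for the basic LLM.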

> Providing response history

This simple technique involves providing the LLM with its own previous answers within the same survey as context for subsequent questions. These simple reminders to the AI of its previous answers ensured answer coherence across questions within a survey.
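A minimal sketch of this idea follows, assuming the LLM is available as a plain function that maps a prompt to an answer. The names `build_prompt`, `run_survey` and `toy_llm`, the persona text and the prompt wording are hypothetical and serve only to show how earlier answers can be carried forward.

```python
from typing import Callable, List, Tuple


def build_prompt(persona: str, history: List[Tuple[str, str]], next_question: str) -> str:
    """Compose a prompt that reminds the model of its earlier answers."""
    lines = [f"You are answering a survey as: {persona}"]
    if history:
        lines.append("Your answers so far:")
        for question, answer in history:
            lines.append(f"- {question} -> {answer}")
    lines.append(f"Next question: {next_question}")
    lines.append("Answer consistently with your earlier answers.")
    return "\n".join(lines)


def run_survey(
    persona: str, questions: List[str], llm: Callable[[str], str]
) -> List[Tuple[str, str]]:
    """Ask the questions in order, feeding the growing history back in."""
    history: List[Tuple[str, str]] = []
    for question in questions:
        answer = llm(build_prompt(persona, history, question))
        history.append((question, answer))
    return history


# Toy stand-in for a model: records each prompt and always answers "5".
seen_prompts: List[str] = []


def toy_llm(prompt: str) -> str:
    seen_prompts.append(prompt)
    return "5"


answers = run_survey(
    "Dog owner, age 34",
    ["Rate 'healthy ingredients' (1-5).", "Rate 'safest food' (1-5)."],
    toy_llm,
)
print(seen_prompts[1])
```

The second prompt printed here contains the first question and its answer, which is the reminder that lets the model keep its ratings coherent across related items.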

> Enriching the LLM’s contextual knowledge

We enhanced an LLM by connecting it to external knowledge using a technique called retrieval-augmented generation (RAG). In our research, we gave the LLM access to the company’s existing qualitative interview transcripts about premium pet food as external knowledge before it answered the survey questions. This simple addition via RAG allowed the LLM to ground its responses in relevant consumer knowledge. Both techniques significantly improved the diversity and internal consistency of the LLM answers, making them more closely resemble human responses.
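The retrieval step behind RAG can be illustrated with a deliberately simple sketch. It ranks transcript passages by word overlap with the survey question and places the best matches in the prompt. Production systems typically use embedding-based search instead, so the overlap scoring here is a swapped-in simplification, and the passages, function names and prompt wording are hypothetical.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query: str, passages: List[str], k: int = 2) -> List[str]:
    """Return the k passages sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        passages, key=lambda p: len(query_words & tokenize(p)), reverse=True
    )
    return ranked[:k]


def grounded_prompt(question: str, passages: List[str]) -> str:
    """Build a prompt that grounds the answer in retrieved interview excerpts."""
    context = "\n".join(f"- {p}" for p in retrieve(question, passages))
    return (
        "Interview excerpts from real consumers:\n"
        f"{context}\n"
        f"Survey question: {question}\n"
        "Answer as a consumer informed by these excerpts."
    )


# Invented transcript snippets for illustration only.
transcripts = [
    "I always read the label because healthy ingredients matter most for my dog.",
    "Price is the main thing I look at when I shop.",
    "My vet said food safety recalls worried her, so I buy brands I trust.",
]

question = "How important are healthy ingredients in pet food?"
print(grounded_prompt(question, transcripts))
```

The resulting prompt would then be sent to the LLM in place of the bare survey question, so that its answer draws on what real consumers said in the interviews.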

How to integrate AI into a research project

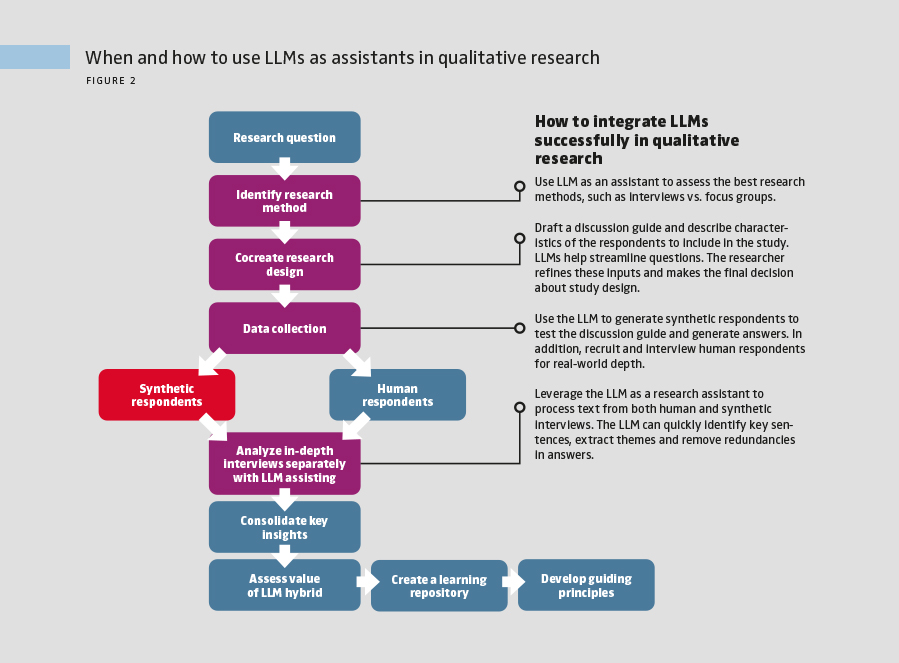

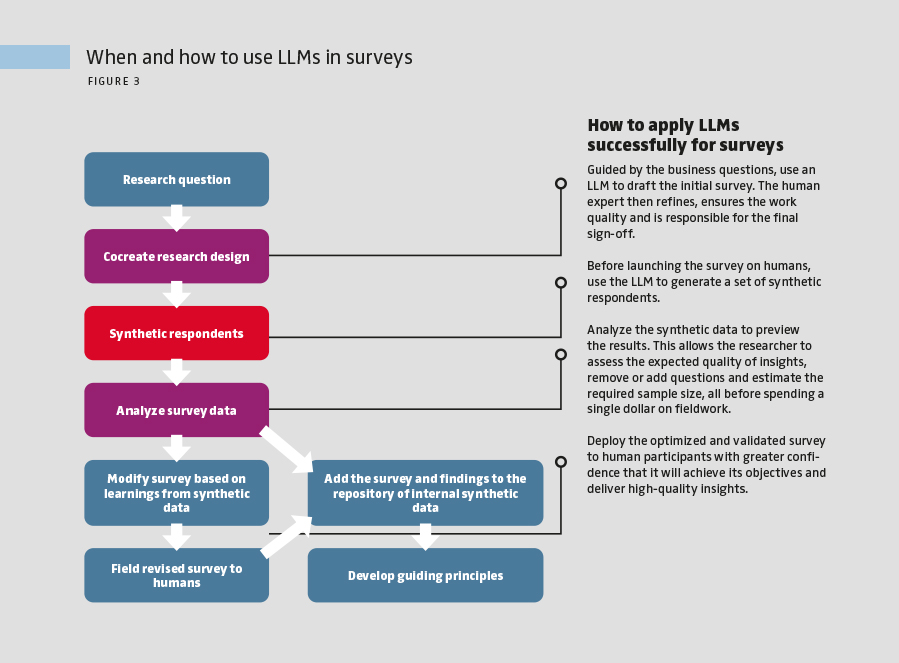

Figures 2 and 3 outline roadmaps and concrete suggestions to incorporate AI into qualitative and quantitative research projects. In both figures, the white, dark gray and light gray blocks represent human-only, LLM-only and human-AI hybrid processes, respectively.

These LLM-assisted research processes improve the efficiency and speed of insight generation and the quality of analyses. With data analysis out of the way, researchers can focus their expert time on interpreting the findings and distilling insights. This approach synthesizes complementary insights from synthetic and human data to formulate recommendations that drive business decisions. The reimagined processes allow researchers to focus on their study’s ability to answer business questions rather than on repetitive tasks. Human experts can create internal learning repositories by saving both human and synthetic data. Comparing these datasets over time helps build institutional knowledge about the business contexts in which LLMs are most effective and helps develop guiding principles for future use.

The future of marketing research is human-AI hybrids

The verdict from our research is unambiguous. AI–human hybrids outperform either side working alone. In qualitative studies, they deliver richer and more meaningful insights. In quantitative surveys, they provide a fast and cost-effective way to visualize the results of a survey before investing in a full-scale project. For managers, the path forward is to start experimenting with human-AI hybrids now and treat AI as a high-impact business partner. This approach not only reduces costs and shortens timelines but also drives better decision-making and sharper strategic insights. At the same time, marketing research leaders must recognize that AI systems can mirror gender, racial and cultural biases present in their training data. Building awareness of these risks and ensuring human oversight in the research process are critical to maintaining accuracy, fairness and trust. In the end, the strongest marketing insights will come from organizations that combine the speed of AI with the discernment of human expertise.

FURTHER READINGS

Arora, N., Chakraborty, I., & Nishimura, Y. (2025). AI–human hybrids for marketing research: Leveraging large language models (LLMs) as collaborators. Journal of Marketing, 89(2), 43–70. https://doi.org/10.1177/00222429241276529

Blanchard, S. J., Duani, N., Garvey, A. M., Netzer, O., & Oh, T. T. (2025). New tools, new rules: A practical guide to effective and responsible generative AI use for surveys and experiments in research. Journal of Marketing, 89(6), 119–139. https://doi.org/10.1177/00222429251349882

Korst, J., Puntoni, S., & Toubia, O. (2025, May–June). How Gen AI is transforming market research. Harvard Business Review. https://hbr.org/2025/05/how-gen-ai-is-transforming-market-research
