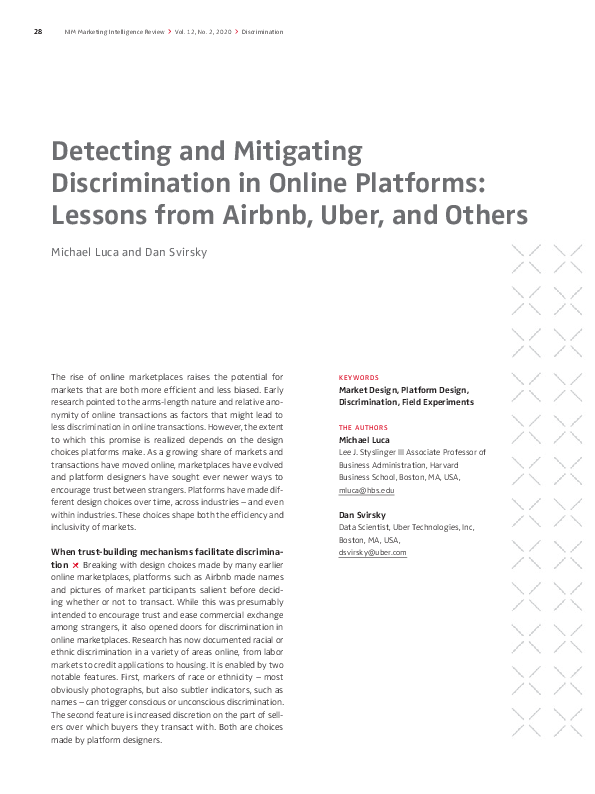

Detecting and Mitigating Discrimination in Online Platforms: Lessons from Airbnb, Uber, and Others

Michael Luca and Dan Svirsky

Research has documented racial and ethnic discrimination in online marketplaces, from labor markets to credit applications to housing. Platforms should therefore investigate how their design decisions and algorithms influence the extent of discrimination in a marketplace. Once managers are aware of the issue, they can address it proactively. In many cases, a simple but effective change is to withhold potentially sensitive user information, such as race and gender, until after a transaction has been agreed to. Platforms can also apply principles from choice architecture to reduce discrimination. For example, people tend to stick with whatever option is set as the default. If Airbnb made instant book the default and required hosts to actively opt out of it, the company could reduce the scope for discrimination. It is important that discrimination and possible solutions are discussed transparently.
